Editor’s Introduction for May 2022 Special Column

Professor John Hattie is well-known for his research into education and his work on Visible Learning (2008) and Visible Learning for Teachers (2013). I was very fortunate to have him as my mentor and thesis supervisor. A key message that resonated with me from his work is this: ‘Know thy impact’. What this means is that assessment information can help us, as teachers, make crucial decisions on how and where to help our students improve (or progress), and more importantly, evaluate our own approaches to teaching and how best to change our practice.

In May’s Special Column, Dr Soh Shean Han from the Faculty of Dentistry discusses how she made use of pre- and post-assessments to evaluate her intervention, i.e., to ‘know thy impact’.

SOH Shean Han

Faculty of Dentistry

Soh, S. H. (2022, May 28). Designing pre- and post- assessments to evaluate an intervention about improving students’ orthodontic treatment planning skills. Teaching Connections.

https://blog.nus.edu.sg/teachingconnections/2022/05/27/designing-pre-post-assessments-to-evaluate-an-intervention-about-improving-students-planning-skills-in-orthodontic-treatment/

Introduction

Orthodontic treatment planning is difficult for undergraduate dental students to grasp because of the high intrinsic cognitive load. Development of an appropriate treatment plan requires a holistic consideration of multiple factors beyond teeth. Moreover, getting the right diagnosis is a prerequisite to developing an appropriate treatment plan.

Given these challenges, I needed to know the impact of my learning intervention, which was an attempt to improve the students’ orthodontic treatment planning skills through the discussion of case studies (see Appendix for details about the learning intervention). Pre- and post-assessments were useful in achieving this goal: they allowed me to compare the scores (quantitative) and written responses (qualitative) across both time points, while taking into account my students’ baseline knowledge.

The following were factors that I considered in the assessment design.

Case Complexity and Number

To enhance the assessments’ validity, the case type and complexity closely matched those in the learning goals. While a larger number of cases could assess student learning with greater validity and reliability, it would also result in assessor and student fatigue. Hence, only one case of each type was incorporated: 1) interceptive (early) treatment, 2) non-surgical comprehensive treatment, and 3) combined orthodontic and orthognathic surgical treatment.

Similarity of Assessments at Both Time Points

The pre- and post-assessments could comprise the same or a different question set. I opted for similar assessments, which allow a more accurate comparison of student performance across both time points without the confounding factor of question complexity. This approach has the added benefit of reducing the time needed to develop two closely matched question sets.

Question Type

There is a plethora of question types that could be used, each with its pros and cons.

Close-ended questions, e.g., true/false or multiple-choice questions (MCQs), are easy to grade, with automated grading enabled in most digital assessment systems. However, there is a 20-50% probability (depending on the number of options) that students can select the correct answer through sheer guesswork, especially if distractors are poorly formed. Such questions also do not simulate real clinical settings, in which clinicians do not have access to options to guide their management of patients.
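The 20-50% range follows from simple arithmetic: with k options and one correct answer, a blind guess succeeds with probability 1/k. The short Python sketch below shows this, assuming uniformly random guessing among equally plausible options; the function name is illustrative only.

```python
from fractions import Fraction


def guess_probability(num_options: int) -> Fraction:
    """Chance of picking the single correct option by blind, uniform guessing."""
    if num_options < 2:
        raise ValueError("a close-ended question needs at least two options")
    return Fraction(1, num_options)


# True/false has two options; typical MCQs have three to five.
for k in (2, 3, 4, 5):
    print(f"{k} options: {float(guess_probability(k)):.0%}")
```

A true/false question sits at the top of the range and a five-option MCQ at the bottom. Poorly formed distractors that students can rule out effectively reduce the number of options, which pushes the chance of a lucky guess higher.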

Conversely, open-ended questions, e.g., short-answer or essay questions, are easier to set but labour-intensive to grade.

I opted for a combination of question types. Short-answer questions were used to assess the students’ rationale for their decisions. To ensure the questions were granular enough to expose students’ knowledge deficiencies and misconceptions, the students were always asked to explain that rationale. These qualitative responses provide tutors with useful information on students’ learning, as well as feedback to improve the content and delivery of subsequent classes.

Question Sequencing

As the correct diagnosis was paramount to deriving the correct treatment plan, it was important to assess the student’s diagnosis along with the treatment plan for each case. This would help tutors determine whether a wrong treatment plan was due to an incorrect diagnosis, a poor understanding of treatment planning concepts, or both.

Scoring Criteria and Weightage

These are important factors in assessment design if one is determining a learning activity’s efficacy solely from the quantitative comparison of scores.

On the scoring criteria, one should consider the following: if a student provides the correct treatment plan but fails to provide the correct rationale, should she/he still be credited for the former? On weightage, how should marks be allocated between the two question parts so that the final score accurately represents students’ understanding of the concepts? Regardless, the final quantitative score should always be interpreted with caution. Closer scrutiny of the scores from each question component is required to derive constructive feedback for teaching.

My preference is to allocate equal marks to both question parts, with grading of both components being interdependent. If the student is unable to provide the correct rationale for the treatment plan, she/he will be denied credit for the entire case.
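To make the difference concrete, the Python sketch below contrasts independent grading with the interdependent rule described above. The function names, the one-mark-per-part weighting, and the handling of a wrong plan paired with a sound rationale are assumptions made for illustration; the text does not specify them.

```python
def score_independent(plan_correct: bool, rationale_correct: bool,
                      marks_per_part: int = 1) -> int:
    """Each part is marked on its own merits (equal marks per part)."""
    return marks_per_part * (int(plan_correct) + int(rationale_correct))


def score_interdependent(plan_correct: bool, rationale_correct: bool,
                         marks_per_part: int = 1) -> int:
    """No correct rationale means no credit for either part."""
    if not rationale_correct:
        return 0
    # Assumption: once the rationale is correct, each part is marked as usual.
    return score_independent(plan_correct, rationale_correct, marks_per_part)


# A student who picks the right plan but cannot justify it:
print(score_independent(True, False))     # 1
print(score_interdependent(True, False))  # 0
```

Under the interdependent rule, a correct treatment plan reached by guessing earns nothing, so the final score reflects understanding rather than luck.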

Case Illustration

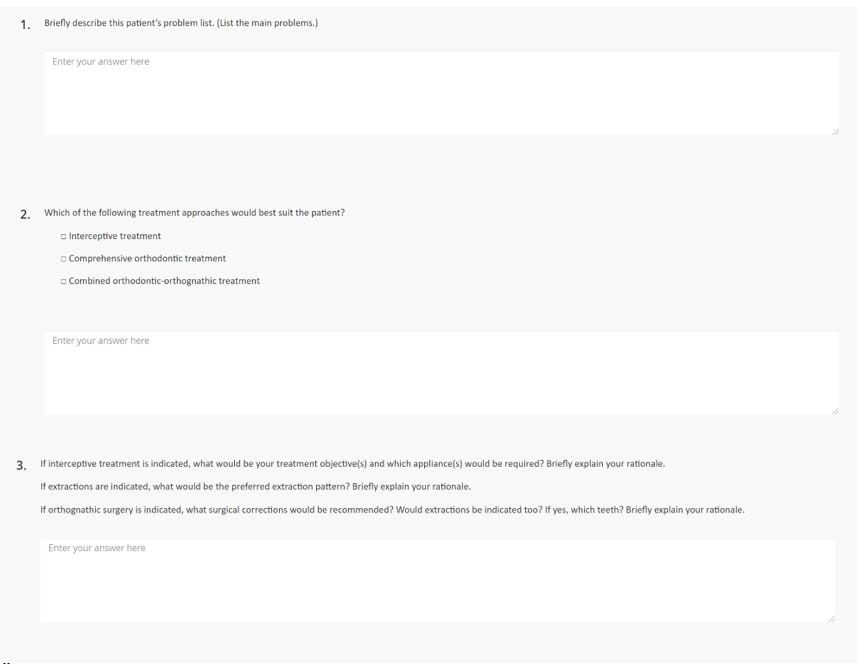

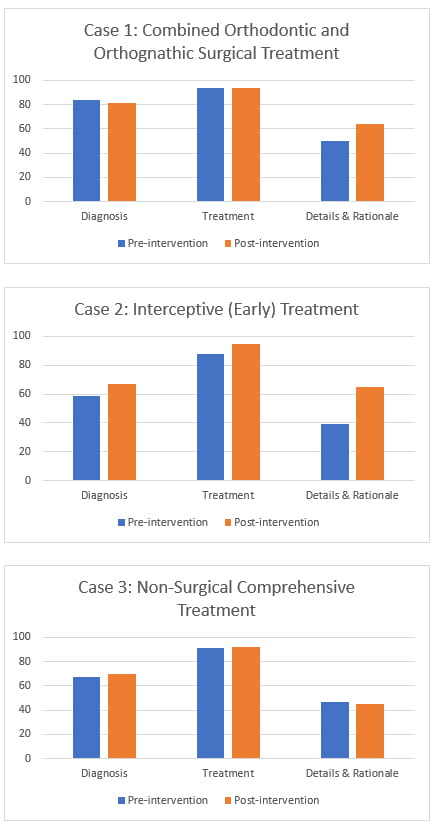

Figure 1 shows an example of the question set. Figure 2 demonstrates a simulated comparative analysis of pre- and post-assessment scores. Refer to Appendix for details on case illustration.

Figure 1. An example of the question set in the pre-post-assessments.

Figure 2. A comparative analysis of the pre- and post-intervention scores.

In conclusion, with thorough consideration of the assessment design, pre- and post-assessments can provide teachers with invaluable insights into students’ learning.

SOH Shean Han is a Senior Lecturer at the Faculty of Dentistry. She teaches orthodontics to undergraduate and graduate students in the Faculty. Her research interests include topics in orthodontics and dental public health. Shean Han can be reached at denssh@nus.edu.sg.

Appendix

The intervention was a case-based learning tutorial on orthodontic treatment. The assessment comprised three cases. Each case had three questions, as illustrated in Figure 1.

Comparing the pre- and post-intervention scores

The high pre-intervention scores in the diagnosis section (Question 1) across all three cases indicate that the poor pre-intervention performance in treatment justification was not attributable to a lack of diagnostic competence, but rather to a lack of understanding of treatment planning concepts.

The lack of significant improvement in the treatment scores (Question 2) across the two time points in all three cases is not unexpected, as Question 2 was close-ended. Moreover, with only three options to select from, the threshold for scoring full marks on the question was lower.

Case 1: There was no significant improvement in the students’ diagnosis and treatment scores (Questions 1 and 2), but there was a significant improvement in their ability to provide details on their chosen treatment plan and explain the rationale for their choice.

Case 2: There was an improvement in student performance across all three questions, with a substantial improvement in the students’ ability to justify their treatment plan.

Case 3: Similar to Case 1, there was no significant improvement in the students’ diagnosis and treatment scores. In contrast to the other two cases, there was no improvement in the students’ ability to explain their treatment choice. A qualitative evaluation of the students’ answers would provide greater insight into the reason(s) for the lack of improvement. This could be attributed to poor case selection (extreme difficulty or inadequate information provided), sub-optimal in-class delivery of concepts, or the inherent complexity of this domain due to the plethora of options available for non-surgical orthodontic treatment.

The comparative analysis illustrates the usefulness of this learning intervention in enhancing the students’ orthodontic treatment planning skills, except in cases involving non-surgical comprehensive treatment.
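The Appendix does not state which statistical test underlies the phrase "significant improvement". As one possible approach, the Python sketch below computes the mean gain and a paired t statistic for the same students' scores on a single question. The scores are invented for illustration and are not taken from the study, and the function name is hypothetical.

```python
from math import sqrt
from statistics import mean, stdev


def paired_gain(pre, post):
    """Return (mean gain, paired t statistic) for matched pre/post scores."""
    if len(pre) != len(post) or len(pre) < 2:
        raise ValueError("need matched pre/post scores for at least two students")
    # One gain per student: post-score minus pre-score.
    gains = [after - before for before, after in zip(pre, post)]
    spread = stdev(gains)
    if spread == 0:
        raise ValueError("all gains are identical; the t statistic is undefined")
    return mean(gains), mean(gains) / (spread / sqrt(len(gains)))


# Invented scores for six students on one question (not real data).
pre_scores = [1, 0, 1, 2, 0, 1]
post_scores = [2, 2, 1, 3, 1, 2]

gain, t_stat = paired_gain(pre_scores, post_scores)
print(f"mean gain = {gain:.2f}, t = {t_stat:.2f}, df = {len(pre_scores) - 1}")
```

The t statistic would then be compared against a t distribution with the stated degrees of freedom. For small classes or coarse ordinal marks, a non-parametric alternative such as the Wilcoxon signed-rank test may be more appropriate. Either way, the numbers only flag where change occurred; the written responses are still needed to explain why.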